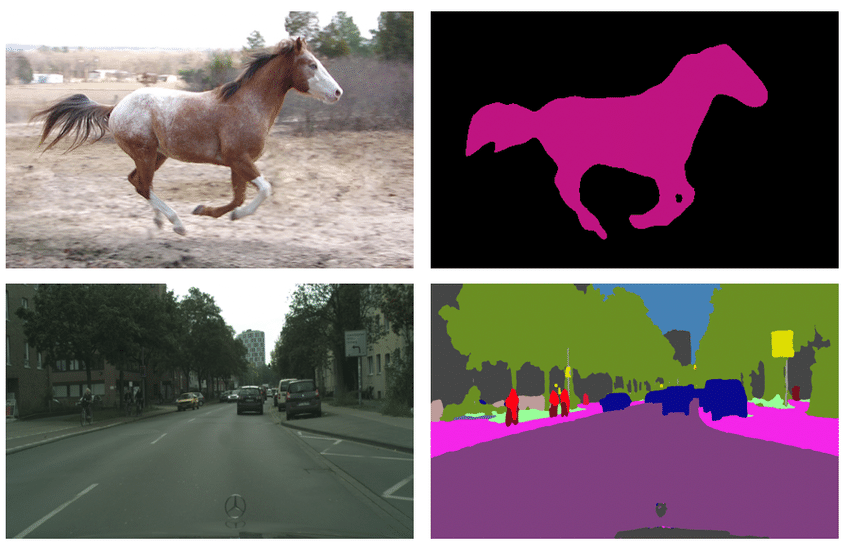

Semantic Segmentation#

Semantic segmentation is a computer vision task in which an algorithm assigns a label or class to each pixel in an image. For example, semantic segmentation can be used to identify the boundaries of different objects in an image, such as cars, buildings, and trees. The output of semantic segmentation is typically an image where each pixel is colored with a different color or label depending on its class.

We solve this task by utilizing segmentation decoder heads on the multi-level image features obtained by the feature extractor backbone. For the supervised training we use the following algorithms components:

Augmentations: Besides basic augmentations like random flip, random rotate and random crop, we use mixing images technique with different photometric distortions.Optimizer: We use Adam and AdamW <https://arxiv.org/abs/1711.05101> optimizers.Learning rate schedule: For scheduling training process we use ReduceLROnPlateau with linear learning rate warmup for 100 iterations for Lite-HRNet family. This method monitors a target metric (in our case we use metric on the validation set) and if no improvement is seen for apatiencenumber of epochs, the learning rate is reduced.For SegNext and DinoV2 models we use PolynomialLR scheduler.

Loss function: We use standard Cross Entropy Loss to train a model.Additional training techniquesEarly stopping: To add adaptability to the training pipeline and prevent overfitting.

Dataset Format#

For the dataset handling inside OpenVINO™ Training Extensions, we use Dataset Management Framework (Datumaro).

At this end we support Common Semantic Segmentation data format. If you organized supported dataset format, starting training will be very simple. We just need to pass a path to the root folder and desired model recipe to start training:

Models#

We support the following ready-to-use model recipes:

Recipe Path |

Complexity (GFLOPs) |

Model size (M) |

FPS (GPU) |

iter time (sec) |

|---|---|---|---|---|

1.44 |

0.82 |

37.68 |

0.151 |

|

2.63 |

1.10 |

31.17 |

0.176 |

|

9.20 |

1.50 |

15.07 |

0.347 |

|

12.44 |

4.23 |

104.90 |

0.126 |

|

30.93 |

13.90 |

85.67 |

0.134 |

|

64.65 |

27.56 |

61.91 |

0.215 |

|

124.01 |

24.40 |

3.52 |

0.116 |

All of these models differ in the trade-off between accuracy and inference/training speed. For example, SegNext_B is the recipe with heavy-size architecture for more accurate predictions, but it requires longer training.

Whereas the Lite-HRNet-s-mod2 is the lightweight architecture for fast inference and training. It is the best choice for the scenario of a limited amount of data. The Lite-HRNet-18-mod2 and SegNext_S models are the middle-sized architectures for the balance between fast inference and training time.

DinoV2 is the state-of-the-art model producing universal features suitable for all image-level and pixel-level visual tasks. This model doesn’t require fine-tuning of the whole backbone, but only segmentation decode head. Because of that, it provides faster training preserving high accuracy.

In the table below the Dice score on some academic datasets using our supervised pipeline is presented. We use 512x512 (560x560 fot DinoV2) image crop resolution, for other hyperparameters, please, refer to the related recipe. We trained each model with single Nvidia GeForce RTX3090.

Model name |

Mean |

||||

|---|---|---|---|---|---|

Lite-HRNet-s-mod2 |

78.73 |

69.25 |

63.26 |

41.73 |

63.24 |

Lite-HRNet-18-mod2 |

81.43 |

72.66 |

62.10 |

46.73 |

65.73 |

Lite-HRNet-x-mod3 |

82.36 |

74.57 |

59.55 |

49.97 |

66.61 |

SegNext-t |

83.99 |

77.09 |

84.05 |

48.99 |

73.53 |

SegNext-s |

85.54 |

79.45 |

86.00 |

52.19 |

75.80 |

SegNext-b |

86.76 |

76.14 |

87.92 |

57.73 |

77.14 |

DinoV2 |

84.87 |

73.58 |

88.15 |

65.91 |

78.13 |

Note

Please, refer to our dedicated tutorial for more information on how to train, validate and optimize the semantic segmentation model.